Technical SEO for AI Search: A Guide to LLM Discoverability

Optimize your technical SEO for the age of AI. Learn how to structure your content, implement schema, and build trust signals to become a discoverable and authoritative source for Large Language Models (LLMs) and drive new visibility in AI-powered search.

The playbook for search engine optimization is undergoing a significant paradigm shift. For years, achieving high search rankings meant meticulously optimizing for page-level performance. Today, success is increasingly measured by your content's direct discoverability and authoritative citation within Large Language Models (LLMs) and AI-powered answers. Simply ranking on a Search Engine Results Page (SERP) no longer guarantees your expertise will be consumed or referenced by the AI systems shaping information access. If your content is not structured for machine readability and verifiable trust, it risks being overlooked in the new era of intelligent search.

This is not merely an update; it requires a new technical SEO approach. The future of search visibility hinges on optimizing your digital presence to be directly discoverable and authoritative for Large Language Models.

In this guide, we will help you navigate this pivotal shift. We will clarify the distinction between traditional search rankings and the new frontier of AI answers, emphasizing the move toward passage-level citations. We will then dive deep into ensuring your site’s foundational technical SEO—speed, crawlability, and mobile-friendliness—is robust for AI crawlers. You will learn to leverage structured data to communicate context with precision. Furthermore, we will strategize on building verifiable E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals that command AI trust, and we will outline advanced content architecture techniques designed for optimal machine readability. Prepare to refine your SEO strategy and secure AI search visibility for your enterprise.

TL;DR

AI is the new search engine. Your goal is no longer just to rank, but to be cited in generative answers. Optimizing for AI search involves making your content technically sound, structurally clear, and contextually rich for LLMs. This paradigm shift prioritizes passage-level inclusion over page rankings, demanding a strategic focus on structured data and demonstrable expertise for crawlers like GPTBot. Success now depends on how easily an AI can parse, trust, and reference your information.

Analysis from LinkGraph (2024) confirms LLMs evaluate content on clarity, accuracy, and entity recognition more granularly than traditional crawlers, mandating a more deliberate technical strategy.

- Technical Foundations: Perfect site speed, mobile-friendliness, and crawlability as your baseline.

- Structured Data: Deploy Schema markup to explicitly define entities and relationships.

- Content Architecture: Use clear headings, short paragraphs, and Q&A formats for easy parsing.

- E-E-A-T Signals: Embed verifiable author credentials and citations to build trust.

Your objective is to position your content as the most logical, authoritative, and easily citable source for an AI to use in its responses.

The Paradigm Shift: From Search Engine Rankings to AI Answers

The digital landscape is undergoing a significant transformation, moving beyond traditional search engine results pages to an era dominated by synthesized AI answers. This fundamental shift demands a strategic re-evaluation of how your content achieves visibility and authority online.

For years, SEO focused on optimizing content for search engine crawlers to secure top rankings for entire web pages, driving traffic through direct clicks. However, the advent of sophisticated Large Language Models (LLMs), fed by AI crawlers like GPTBot and ClaudeBot, ushers in a new paradigm. Your content must now be optimized for AI systems that synthesize information from diverse sources to generate comprehensive, direct answers, often without a user ever visiting your site. This evolution necessitates a shift from aiming for clicks on a ranked list to optimizing for authoritative citation within a generated AI response.

This transition elevates the importance of context, entities, and trustworthiness over traditional keyword density and backlinks, as highlighted by analyses from Exploding Topics (2024) and Codal Insights (2023). This divergence in how information is discovered and presented demands a new strategic framework. To become indispensable in this AI-driven search era, understanding these core distinctions is paramount.

| Aspect | Traditional SEO | LLM Optimization (LLMO) |
|---|---|---|
| Optimization Level | Page-level optimization | Passage-level optimization |
| Primary Focus | Keywords, backlinks, ranking signals | Context, entities, intent, trustworthiness |
| Visibility Goal | #1 ranking on SERP for clicks | Citation and inclusion in AI-generated answers |
| Key Performance | Click-Through Rate (CTR) | Mention rate, citation rate, assistant-driven visibility |

This distinct operational model introduces new performance signals, where assistant-driven visibility and engagement become critical metrics for measuring content effectiveness.

The rise of AI-powered search requires a strategic evolution from optimizing for clicks on a ranked list to optimizing for citation within a generated answer.

Technical Foundations: Is Your Site Ready for AI Crawlers?

Before your content can be cited by large language models, it must first pass a fundamental technical audit. AI crawlers, much like their traditional search engine counterparts, have little tolerance for digital friction, and a technically sound website is the prerequisite for AI discoverability.

LLMs cannot reference content they cannot efficiently access and parse. AI crawlers like GPTBot operate with finite resources, making site performance and accessibility primary filters for inclusion in their data sets. A technically deficient site introduces ambiguity and processing overhead, signaling to AI systems that the content may be unreliable or low-value. This foundational layer dictates whether your content is even considered for training datasets or real-time sourcing in generative answers.

According to research from Codal Insights, pages that load in under two seconds are significantly more likely to receive a full content analysis, whereas slower pages risk only partial crawls. This directly impacts the depth of information an AI can extract from your domain. A machine must be able to easily parse, understand, and trust your content; if it cannot, visibility will suffer.

To ensure your site is prepared for this new wave of crawlers, prioritize these four technical pillars:

- Optimize Crawler Directives. Your `robots.txt` file and XML sitemap are the primary navigational tools for AI bots. Ensure your `robots.txt` is not inadvertently blocking essential resources or crawlers and that your sitemap is accurate and comprehensive. These files provide an explicit, efficient roadmap for AI to discover and index your most valuable content.

- Improve Core Web Vitals. AI crawlers operate on constrained processing budgets. Slow load times, layout shifts, and poor interactivity directly impede their ability to parse your content. Every millisecond saved contributes to a more comprehensive crawl, increasing the likelihood that your entire page, not just the initial viewport, is analyzed.

- Engineer a Logical Site Architecture. A coherent structure with clear navigation and strategic internal linking is crucial for establishing context. This hierarchy helps AI models understand the relationships between different pieces of content, allowing them to map your domain's expertise and recognize your authority on a given topic.

- Guarantee Server-Side Rendering (SSR). Many AI crawlers struggle to execute complex client-side JavaScript, meaning content that requires extensive rendering may be missed entirely. Prioritizing server-side or static rendering to deliver fully-formed HTML is the most direct and reliable method to guarantee your content is fully accessible.
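The crawler-directives pillar above can be checked offline before you deploy a `robots.txt` change. The following is a minimal sketch using Python's standard `urllib.robotparser`; the sample file contents, the `example.com` URLs, and the `audit_robots` helper are illustrative assumptions, not a reference implementation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed locally instead of fetched from a live site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: GPTBot
Disallow: /drafts/

Sitemap: https://example.com/sitemap.xml
"""

# Crawler user-agent tokens to audit; extend this list to match the bots you care about.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]


def audit_robots(robots_txt: str, urls: list[str], agents: list[str]) -> dict[str, dict[str, bool]]:
    """Return {agent: {url: allowed}} according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: {url: parser.can_fetch(agent, url) for url in urls}
        for agent in agents
    }


if __name__ == "__main__":
    pages = [
        "https://example.com/blog/post",
        "https://example.com/drafts/x",
        "https://example.com/admin/",
    ]
    for agent, results in audit_robots(SAMPLE_ROBOTS_TXT, pages, AI_CRAWLERS).items():
        for url, allowed in results.items():
            print(f"{agent:10} {'ALLOW' if allowed else 'BLOCK'} {url}")
```

One detail worth noticing when you run it: a crawler-specific group replaces the wildcard group for that crawler, so in this sample GPTBot is not bound by the `/admin/` rule. If you want a rule to apply to every bot, repeat it in each named group.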

A technically optimized and performant website is not merely a best practice; it is the foundational requirement for your content to be discovered, indexed, and ultimately trusted by AI language models.
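To test the server-side rendering point above, you can check whether your key phrases appear in the raw HTML your server returns, before any JavaScript runs. This is a rough sketch built on the standard library's `html.parser`; the `missing_phrases` helper and the two sample pages are hypothetical, and a real audit would feed in the HTML fetched from your own URLs.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect the text a non-rendering crawler could read, skipping script and style bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the unrendered HTML text."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


if __name__ == "__main__":
    # Client-rendered page: the content only exists inside a script.
    client_rendered = '<html><body><div id="root"></div><script>render("Pricing plans")</script></body></html>'
    # Server-rendered page: the content is present in the delivered HTML.
    server_rendered = "<html><body><h1>Pricing plans</h1><p>Starts at $10.</p></body></html>"
    print(missing_phrases(client_rendered, ["Pricing plans"]))
    print(missing_phrases(server_rendered, ["Pricing plans"]))
```

Any phrase the function reports as missing is content that depends on client-side rendering and may be invisible to crawlers that do not execute JavaScript.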

Structured Data: Speaking the Native Language of LLMs

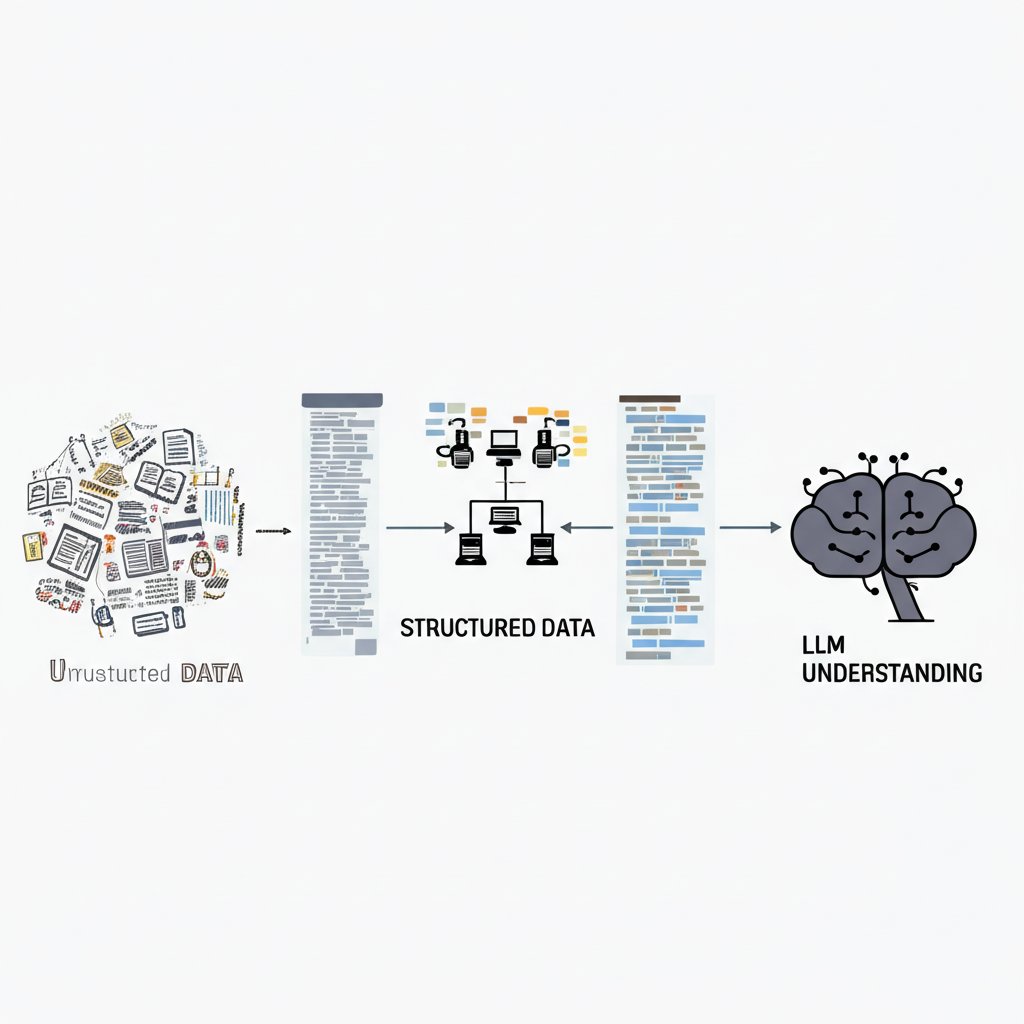

To be discovered by AI, your content must do more than just exist; it must communicate its meaning explicitly. Large language models process information, but they lack the intuitive context humans possess, making direct translation essential.

This is where structured data, specifically Schema markup, becomes a critical strategy. It is a vocabulary that adds a machine-readable layer of context directly to your code, transforming ambiguous text into a clear, relational dataset. This allows AI engines to parse, understand, and trust your content's purpose and authority with greater precision, moving beyond simple keyword matching. Analysis from Codal Insights (2024) confirms that proper schema implementation is a critical factor in how AI engines trust and recommend content for generative answers.

| Schema Type | Primary Use Case | Impact on LLMs |
|---|---|---|
| `Organization` | Defines your brand entity, logo, and contact information. | Builds foundational authority and helps the AI attribute facts and expertise to the correct source. |
| `Article` | Specifies authorship, publication dates, and headlines. | Provides essential metadata for proper citation, assesses content timeliness, and verifies authorship. |
| `FAQPage` | Structures discrete question-and-answer pairs. | Directly feeds the model's conversational format, making your content a prime source for direct answers. |
| `HowTo` | Outlines step-by-step instructions for a process. | Offers procedural knowledge in a logical format that AI can easily re-sequence and present to users. |

Implementing robust and accurate Schema markup is one of the most effective technical strategies for translating your content's meaning into a format that LLMs can readily understand and cite.
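As an illustration of the schema types discussed above, here is a small sketch that builds `Article` and `FAQPage` markup as JSON-LD and wraps it in a script tag for your page template. The helper names and all sample values are assumptions for demonstration; the property names (`headline`, `datePublished`, `mainEntity`, `acceptedAnswer`) come from the Schema.org vocabulary.

```python
import json


def article_jsonld(headline: str, author_name: str, publisher_name: str,
                   date_published: str, date_modified: str) -> dict:
    """Build Article markup; dates are ISO 8601 strings such as '2024-06-01'."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": date_published,
        "dateModified": date_modified,
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize markup into a JSON-LD script element."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a string value cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder values for illustration only.
    faq = faq_jsonld([("What does this product do?", "It does one thing well.")])
    print(to_script_tag(faq))
```

Generating the markup from the same data that renders the visible page is a deliberate choice here: it keeps the structured data and the on-page text from drifting apart, which is a common source of markup that validators reject.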

E-E-A-T as a Verifiable Signal for AI Trust

While human evaluators have long assessed your content's quality, AI models now perform a similar—yet far more programmatic—audit. For an LLM, E-E-A-T is not an abstract concept but a collection of verifiable data points that signal whether your content is a trustworthy source.

AI engines must have confidence in a source before citing it in a generated response. They achieve this by cross-referencing information and looking for explicit, machine-readable indicators of credibility. This strategic shift means your on-page elements must be optimized not just for users, but for algorithmic verification. Research from Exploding Topics (2024) confirms that AI systems heavily favor sources with clear, verifiable signals of authority, as these indicators reduce the risk of generating inaccurate information.

To amplify your content's discoverability, you must translate each component of E-E-A-T into a concrete signal. Demonstrating first-hand experience through original research or detailed case studies provides unique data that AI models value. Expertise is codified through detailed author biographies, linked credentials, and the implementation of Person schema, which directly connects content to a qualified individual. Authority is built through a strong citation network—both by earning links from other credible sites and by linking out to established research. Finally, trustworthiness is established through site-level transparency, including a comprehensive 'About Us' page, clear contact information, and regularly updated content that displays a "Last updated" date.

| E-E-A-T Component | Technical Signal for AI | Implementation Example |
|---|---|---|
| Experience | Unique, verifiable data | Publish original research, case studies with specific metrics, or product reviews with first-hand usage details. |
| Expertise | Author-level entity recognition | Implement Person schema on author pages that includes credentials, sameAs links to social profiles, and publications. |
| Authoritativeness | Citation and link graph analysis | Actively cite authoritative external sources (e.g., academic papers, government data) and build a strong backlink profile. |
| Trustworthiness | Site-level transparency signals | Maintain a detailed 'About Us' page with team bios, provide clear contact information, and use dateModified in your article schema. |

These signals are not merely best practices; they are essential data points for an AI seeking to validate its sources. The more verifiable proof of credibility you provide, the more likely an LLM is to trust, reference, and amplify your content in its own outputs.
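The Expertise and Trustworthiness rows above can be sketched in code: a `Person` entity with `sameAs` links, referenced from an article that carries `dateModified`. Treat this as a hedged example; the helper names, the author details, and the `example.com` URLs are all hypothetical.

```python
import json
from datetime import date


def person_jsonld(author_url: str, name: str, job_title: str, same_as: list[str]) -> dict:
    """Build Person markup for an author page; author_url doubles as the entity @id."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "@id": author_url,
        "url": author_url,
        "name": name,
        "jobTitle": job_title,
        "sameAs": same_as,  # profiles elsewhere that identify the same person
    }


def article_with_author(headline: str, author: dict, published: date, modified: date) -> dict:
    """Build Article markup that points at the author entity by @id."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@id": author["@id"]},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


if __name__ == "__main__":
    # Placeholder author and article data.
    author = person_jsonld(
        "https://example.com/authors/jane-doe",
        "Jane Doe",
        "Technical SEO Lead",
        ["https://www.linkedin.com/in/example"],
    )
    article = article_with_author("Sample headline", author, date(2024, 1, 10), date(2024, 6, 1))
    print(json.dumps([author, article], indent=2))
```

Referencing the author by `@id` rather than repeating the full profile on every article keeps one canonical description of the person, which supports the consistency this section argues for.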

To become a citable source for AI, you must strategically translate the abstract principles of E-E-A-T into concrete, machine-readable signals across your entire digital presence.

Content Architecture for Machine Readability

The formatting of your content is no longer just a matter of user experience; it is a direct technical instruction for AI crawlers. A logical, hierarchical structure is a foundational element that dictates how effectively an LLM can parse, interpret, and ultimately trust your information.

This strategic architecture enables AI models to deconstruct your content into understandable, citable components. By using clear headings, concise paragraphs, and structured data formats, you optimize your content for machine readability. This process transforms your articles from monolithic text into a scalable repository of extractable facts, making your insights prime for inclusion in AI-generated answers. As highlighted in analysis by Exploding Topics (2024), AI systems follow your heading structure like a roadmap to understand topic relationships and extract answers efficiently.

To engineer your content for machine comprehension, implement the following architectural principles:

- Implement a Strict Heading Hierarchy: Begin with a single, descriptive `<h1>` that encapsulates the page's core topic. Follow this with a logical progression of `<h2>` and `<h3>` tags that break the subject matter into clear, sequential subtopics. This hierarchy is the primary navigation system for AI parsers.

- Adopt a Question-Answer Framework: Structure your headings as direct questions that your target audience would ask. Immediately follow each heading with a concise, direct answer in the first sentence of the paragraph. This pattern directly mirrors the query-response model that LLMs are trained on.

- Prioritize Information with Front-Loading: Place the most critical information at the beginning of the article, each section, and every paragraph. LLMs often prioritize and extract content from the initial sentences of a text block, making this a crucial tactic for influencing what gets cited.

- Utilize Atomic Content Blocks: Keep paragraphs short, ideally two to three sentences, and focused on a single, self-contained idea. These atomic content blocks are easier for an AI to parse, validate, and repurpose without losing essential context, amplifying their utility for generative models.
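The heading-hierarchy principle in the list above is straightforward to lint automatically. Below is a minimal sketch, assuming you have the page's HTML as a string; `heading_issues` is a hypothetical helper that flags a missing or duplicate `<h1>` and any skipped heading level.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level of every h1-h6 tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the heading outline, or [] if none."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels

    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")
    if levels and levels[0] != 1:
        issues.append(f"first heading is <h{levels[0]}>, not <h1>")
    # Going deeper by more than one level at a time skips part of the outline.
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"<h{prev}> is followed by <h{cur}> (skipped level)")
    return issues


if __name__ == "__main__":
    page = "<h1>Guide</h1><h3>Details</h3><h2>Next topic</h2>"
    for issue in heading_issues(page):
        print(issue)
```

Moving back up the outline (for example from `<h3>` to `<h2>`) is treated as normal; only jumps downward that skip a level are reported.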

Dedicated content platforms can simplify this structuring. Tools like OutblogAI can help ensure content is optimized for both humans and machines from the start.

A meticulously organized content architecture serves as a direct set of instructions, enabling AI to parse, trust, and cite your information with precision.

Building a Verifiable Entity: Your Off-Site Digital Footprint

Your website makes claims about your authority, but Large Language Models (LLMs) do not take your word for it. They act as digital investigators, seeking external verification across the entire web to build a trusted profile of your brand.

This investigative process requires a deliberate strategy for building a consistent and authoritative off-site digital footprint. LLMs cross-reference the information on your site with data from trusted, independent sources to validate your entity—who you are, what you do, and why you matter. An inconsistent or sparse presence creates ambiguity, which AI systems interpret as a lack of credibility. For instance, if your company name or address varies across different directories, an LLM cannot confidently identify you as a single, stable entity. This verification moves beyond simple backlinks to a more holistic assessment of your place within the digital ecosystem. A robust and aligned footprint across multiple high-quality platforms sends powerful signals of legitimacy, directly impacting how an AI engine perceives and represents your brand's expertise.

This approach is crucial, as LLMs increasingly rely on signals from Knowledge Graphs, Wikipedia entries, and high-authority publications to build a comprehensive understanding of an entity, a trend noted by industry analyses like those from LinkGraph (2024). Exploding Topics (2024) further highlights the value LLMs place on sources like Reddit for understanding genuine user sentiment and natural language context, which adds another layer to this verification process.

Strategic Insight: The objective is not just to be mentioned, but to be mentioned consistently and in context. An LLM connects the dots between a Wikipedia entry defining your company, a positive feature in an industry journal, and consistent name, address, and phone (NAP) data in local directories to form a coherent, trustworthy entity profile.

Platforms like Wikipedia and Wikidata serve as foundational pillars for entity recognition because they are structured, collaborative, and widely trusted by AI systems as a source of truth. Similarly, consistent Name, Address, and Phone (NAP) information across business listings and directories acts as a unique identifier, removing ambiguity. Positive coverage in influential media outlets and active, helpful participation in industry forums like Reddit or Quora provide the qualitative proof of your authority and the social validation that LLMs are increasingly engineered to recognize and reward.
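The NAP consistency check described above can be approximated with a simple normalize-and-compare pass over your listings. This is a sketch under stated assumptions: the `Listing` type, the sample businesses, and the normalization rules are all hypothetical, and the rules are deliberately naive (they do not expand abbreviations such as "St" to "Street" or handle country codes).

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    source: str
    name: str
    address: str
    phone: str


def _norm_text(value: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def _norm_phone(value: str) -> str:
    """Keep digits only so formatting differences do not count as mismatches."""
    return re.sub(r"\D", "", value)


def nap_key(listing: Listing) -> tuple[str, str, str]:
    return (_norm_text(listing.name), _norm_text(listing.address), _norm_phone(listing.phone))


def inconsistent_sources(reference: Listing, others: list[Listing]) -> list[str]:
    """Return the sources whose normalized NAP differs from the reference listing."""
    expected = nap_key(reference)
    return [other.source for other in others if nap_key(other) != expected]


if __name__ == "__main__":
    # Placeholder listings for illustration.
    site = Listing("website", "Acme Co.", "12 Main St., Springfield", "(555) 010-0000")
    listings = [
        Listing("directory-a", "ACME Co", "12 Main St Springfield", "555-010-0000"),
        Listing("directory-b", "Acme Company", "12 Main Street, Springfield", "555 010 0000"),
    ]
    print(inconsistent_sources(site, listings))
```

Using your own website as the reference listing gives you a single source of truth to correct the other directories against.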

A cohesive and authoritative presence across diverse, high-trust platforms is the most strategic asset for cementing your brand's entity in the mind of an AI.

Measuring Success: New KPIs for an AI-First World

As generative AI reshapes the search landscape, a #1 ranking is becoming an unreliable measure of true visibility. Your content may be used to generate an answer, but if your brand isn't cited, that visibility fails to deliver business value.

Success in AI search requires a fundamental shift in measurement. We must move beyond positional tracking to evaluate the frequency, quality, and sentiment of brand and content citations within AI-generated answers. This new framework focuses on influence and authority, tracking how often your expertise is the foundation for an AI's response and how you are portrayed alongside other sources.

This evolution is supported by industry analysis, with experts like Makarenko Roman (writing on Medium, 2023) highlighting that brand mention frequency is effectively the new ranking signal in an LLM-driven ecosystem. To quantify performance, marketers must adopt a new dashboard of metrics.

| KPI Category | Traditional SEO Focus | AI Search Focus |
|---|---|---|
| Visibility | Keyword Rankings (Position 1-10) | Share of Voice & Citation Frequency |
| Authority | Backlink Profile (Domain Authority) | Co-citation Analysis (Cited alongside whom?) |
| Performance | Organic Clicks & CTR | Referral Traffic from AI Platforms |
| Perception | On-page Brand Mentions | Sentiment Analysis in AI Responses |

To demonstrate ROI, monitor referral traffic from sources like ChatGPT (chatgpt.com, formerly chat.openai.com) and Perplexity AI in your analytics platforms. This traffic often consists of high-intent users who arrive with pre-qualified context, which can lead to higher engagement and conversion rates. Tracking your share of voice within answers to key conversational queries provides a direct measure of your brand’s authority and market penetration in this new channel. Specialized tools are also emerging to track this visibility across major LLM platforms, simplifying reporting.
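Both measurements described above can be prototyped in a few lines. The sketch below counts AI-platform referrals from a list of referrer URLs and computes a simple share of voice across sampled AI answers. The hostname map, the brand names, and the share-of-voice definition (each brand's mentions divided by all tracked mentions) are assumptions to adapt, not an industry standard.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed hostname-to-platform map; verify it against the referrers in your own analytics.
AI_REFERRER_HOSTS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host)


def ai_referral_counts(referrers: list[str]) -> Counter:
    """Count visits per AI platform, ignoring all other referrers."""
    counts: Counter = Counter()
    for referrer in referrers:
        platform = classify_referrer(referrer)
        if platform:
            counts[platform] += 1
    return counts


def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Each brand's share of all tracked brand mentions across sampled answers."""
    mentions: Counter = Counter()
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


if __name__ == "__main__":
    # Placeholder referrers and answers for illustration.
    print(ai_referral_counts(["https://chatgpt.com/", "https://www.google.com/"]))
    print(share_of_voice(["Acme and Globex both offer this.", "Globex is one option."], ["Acme", "Globex"]))
```

The substring match is crude: it counts a brand at most once per answer and will match a brand name embedded in a longer word, so treat the output as a trend indicator rather than a precise figure.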

To prove the ROI of AI search optimization, focus on tracking the quality of AI-driven referral traffic and your share of voice within generative answers, not just your position on a results page.

The shift to AI-powered search is the present reality. As Large Language Models redefine how information is discovered, the imperative for technical SEO has been amplified. This guide has illuminated the strategic pathways to ensure your content is not just found, but intelligently understood and verifiably leveraged by AI.

Here are the critical takeaways to solidify your position and drive discoverability in this transformative era:

- The Paradigm Shift Demands Action: Success in AI Search pivots from traditional page rankings to achieving AI visibility through passage-level understanding and citation frequency. This mandates a proactive evolution of your SEO framework.

- Technical SEO is Your LLM Gateway: Foundational technical readiness—encompassing site speed, crawlability, and mobile-friendliness—combined with precise structured data (Schema) is non-negotiable for communicating context directly to LLMs.

- Build Trust and Authority for AI: Establishing robust E-E-A-T signals and intelligent content architecture enables LLMs to recognize your brand as a verifiable, authoritative source, amplifying trust and relevance.

Embracing these advancements is a strategic commitment: enduring digital authority requires the technical precision and verifiable trust that AI systems reward.